Note: AI helped me write this because my brain entered read-only mode at 5am. OK, not everything: the typos and questionable life decisions are still mine.

The Real Barrier Isn’t Technical

A few weeks ago, I started experimenting with AI agents.

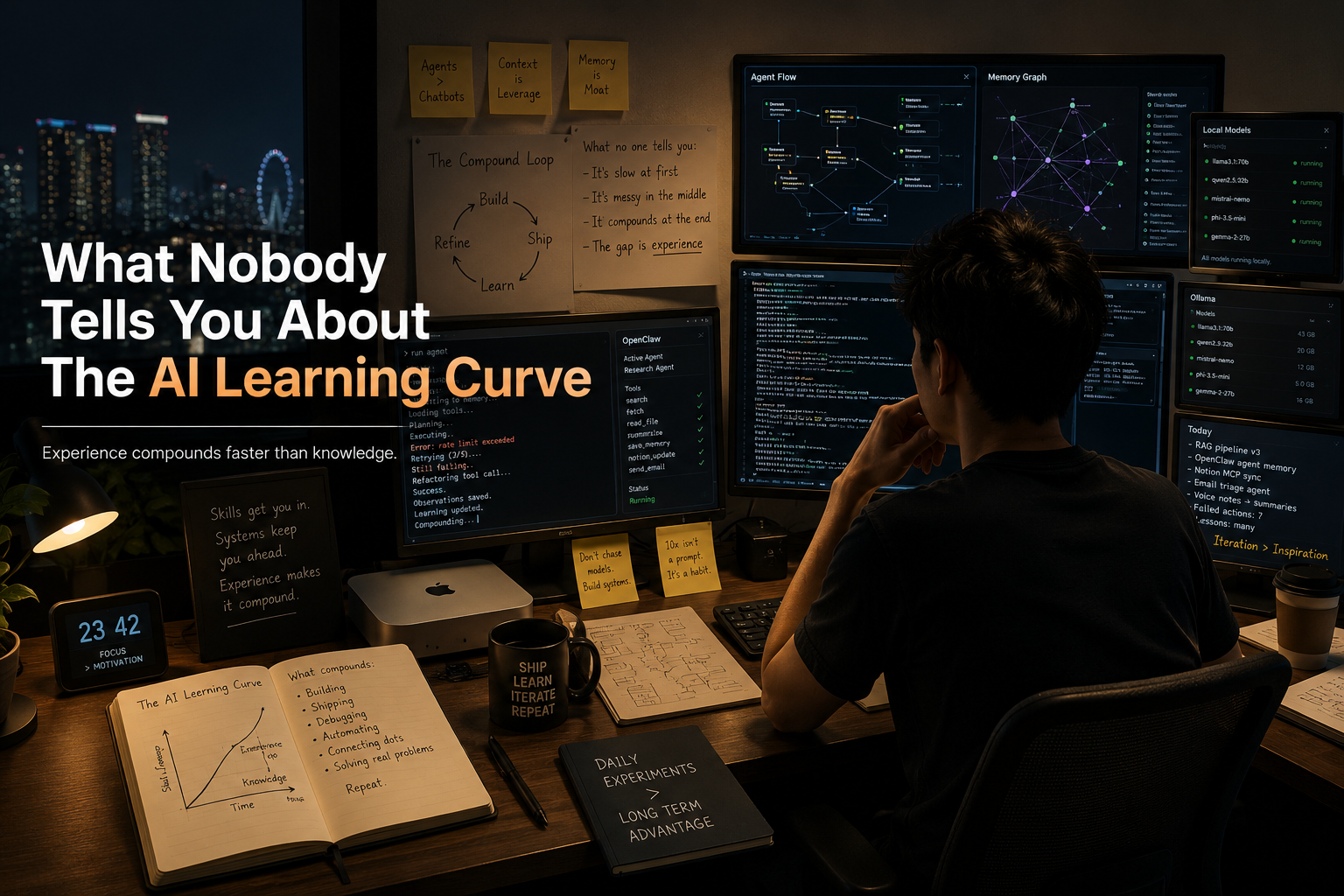

Late at night, after my kids had gone to sleep, I would sit down and try to get something working. First, it was wiring everything together and getting the agent platform to function reliably. Then came a calendar agent that could read my emails (with or without a separate agent inbox; each option has trade-offs I will not go into here). A morning briefing that would arrive before I even woke up. A memory system that could retain what I had read, so I did not have to keep everything in my own head.

I had read that OpenClaw was unstable and difficult to get working consistently. I wanted to see for myself. Setting it up pushed me to understand the underlying concepts more deeply: how agents route requests to different models, how memory persists across sessions, and how automation chains are orchestrated. The knowledge and resources were already out there, shared by countless others who were also experimenting, failing, learning, and gradually figuring things out along the way.

It helps that I have a software engineering background. I can read code, understand APIs, debug when things break. But I’ve found that this isn’t the barrier most people think it is. The tools are surprisingly accessible once you commit to the mess. The real barrier is a mindset: the belief that you should only start once you understand the landscape.

I used to think that way. I don’t anymore, and here’s why.

This Space Moves Faster Than Any I’ve Seen

Things change daily, and new features ship every week. For the first time in my career, I find myself questioning whether I should update to the latest version, because the latest doesn’t always mean the best experience. Things break in this fast-moving space. A model you relied on gets deprecated. A feature that worked yesterday behaves differently after an upgrade.

This is the nature of a space that’s still being built. The people waiting for stability are waiting for something that doesn’t exist yet.

Every night I’d spend time checking where things failed. A cron job that silently errored. A model that stopped responding. A memory system that lost context. Slowly, I built up a set of services that held together. Then something shifted — I started using the system to improve itself. Automated health checks. Drift detection. Fallback chains that kick in when something goes down.

Now those late nights are fewer. I spend less time fighting fires and more time sleeping. The system doesn’t run itself, but it runs itself better than it did a month ago. And that trend, less manual firefighting over time, is the signal I’m watching.

The gap compounds every day. Everyone I know who started experimenting early has something the rest of us do not: operational intuition. They know which models suit their workflows best, and which ones consistently fall short for their specific use cases. They have seen tools that worked flawlessly on Tuesday suddenly break by Thursday. They have learned to treat updates cautiously, to test before upgrading, and to expect friction as part of the process. This is not the kind of knowledge you gain from reading documentation alone. It is knowledge earned through repeated experimentation, failure, and adaptation.

For you, the takeaway is simple: every week you spend waiting to see is a week someone else spends building the mental model you’ll need later. The cost isn’t the subscription fees. It’s the missed learning cycles.

The Threshold for Trying Has Collapsed

Before AI, if I wanted a tool that didn’t exist, the path from idea to working thing was long: coding, hosting, maintaining. Or buying something that almost fit and adapting my workflow to it.

Today, the distance from “I want this” to “I have something that mostly works” is measured in hours. The iteration cycle is conversational. I can describe what I want, see the result, and adjust. The question quietly shifted from “Can I build this?” to “Why not build this?”

There was an initial building phase where everything was exciting. Ideas came fast and I brought them to life — a daily briefing, a calendar agent, a memory system. Each new thing worked well enough to be useful, and the speed felt exhilarating.

Then I hit a different phase. I ran out of obvious things to build. The question became: what next? That’s when I shifted from building new things to looking at patterns — examining workflows, finding friction, improving what already existed. The exciting build phase gave way to an operations phase. It’s less glamorous but more impactful. Small improvements to running systems compound faster than new features.

For you, the lesson is that the build phase doesn’t last forever, and that’s okay. The real value comes from refining what you already have. Look for patterns, not features. Improve workflows, not tools. And if you’ve ever had a recurring problem (a manual process that annoys you, information you wish arrived automatically, a decision you keep remaking), the threshold for automating it is lower than you think. Try the worst version first. Improve it tomorrow.

The Economics Changed Everything

This surprised me the most. Everything is API-based billing now. Costs are real and they add up. Models are flooding the market, dozens of them, each with different strengths, pricing, and trade-offs. And there are many ways to run them: cloud APIs, local models, hybrid setups.

The skill I’ve developed isn’t prompt engineering. It’s resource allocation — knowing which problem belongs to which tool. Expensive reasoning models for complex decisions. Cheap, fast models for routine summarisation. Local models for tasks that don’t need cloud access. Fallback chains because models change pricing or go down without warning.

I now spend more time routing tasks to the right tier than I do writing prompts. Getting this wrong means burning through your budget or getting poor results for tasks that deserve better. Getting it right means your tools run reliably at a cost that makes sense.
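To make the routing idea concrete, here is a minimal sketch of a tiered fallback chain. It is an illustration under stated assumptions, not a description of any particular platform: the task kinds, the model names, and the stub functions standing in for real API calls are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Model:
    name: str                    # hypothetical label, not a real model ID
    call: Callable[[str], str]   # takes a prompt, returns text, may raise


class AllModelsFailed(Exception):
    """Raised when every model in a tier's chain has errored."""


def route(task_kind: str, prompt: str, tiers: dict[str, list[Model]]) -> tuple[str, str]:
    """Try each model in the tier's chain in order; return (model name, reply)."""
    errors = []
    for model in tiers[task_kind]:
        try:
            return model.name, model.call(prompt)
        except Exception as exc:  # outage, rate limit, deprecated model, ...
            errors.append(f"{model.name}: {exc}")
    raise AllModelsFailed("; ".join(errors))


# Stubs standing in for real API or local-model calls (assumptions).
def flaky_cloud(prompt: str) -> str:
    raise TimeoutError("no response")


def local_model(prompt: str) -> str:
    return f"summary of: {prompt}"


# One ordered chain per kind of task: first choice first, fallbacks after.
TIERS = {
    "summarise": [Model("cheap-cloud", flaky_cloud), Model("local-small", local_model)],
    "decide": [Model("reasoning-large", local_model)],
}

print(route("summarise", "inbox digest", TIERS))
```

In this run the cheap cloud stub times out, so the request quietly falls through to the local model. Keeping the chain as plain data means changing a tier is a one-line edit rather than a code change.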

Start with the cheapest option that gets the job done. If possible, try running models locally as well. You will learn their limitations and what it actually takes to operate them on-premises, and gain an appreciation for the organisations managing these systems in-house at scale. Upgrade only when you genuinely hit a ceiling. Most practical and useful automations do not require the most advanced or expensive model to be effective.

The Process Is Iterative, Not Architectural

Everything I built started rough. The daily briefing went through five format changes before it felt right. The calendar agent started as a script that forwarded emails, and slowly learned to understand context, check availability, and ask the right questions. The memory system took three redesigns.

Each iteration taught me something I couldn’t have planned for. The polish comes from use, not from up-front design. Don’t try to build the perfect version. Build the version that almost works, then make it better tomorrow. The thing that makes you cringe six months from now is the proof that you learned.

Build Self-Improvement Into the System

One of the most interesting decisions I made was to build a self-improving loop into the system. Every night, it runs a check — looking at what’s working, what’s degrading, what could be better. It learns my patterns and adjusts. I didn’t design this upfront. It emerged from watching things drift.

The concept of a system that improves itself without human intervention sounds futuristic. In practice, it’s simple: define what good looks like, measure against it, and give the system permission to change. The hard part is trusting it enough to let it try.
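Those three steps can be sketched in a few lines. This is a rough illustration rather than a real implementation: the metric names, targets, tolerances, and the list of pre-approved fixes are all made up for the example. "Permission to change" is modelled as an explicit allow-list, and anything outside it is flagged for a human instead.

```python
from dataclasses import dataclass


@dataclass
class Check:
    name: str                      # hypothetical metric name
    target: float                  # what "good" looks like
    tolerance: float               # how far from target counts as drift
    higher_is_better: bool = True


def drifted(check: Check, observed: float) -> bool:
    """True when the observed value is worse than target by more than tolerance."""
    if check.higher_is_better:
        gap = check.target - observed
    else:
        gap = observed - check.target
    return gap > check.tolerance


def nightly_review(checks, observations, allowed_fixes):
    """Return (fixes the system may apply itself, issues to flag to a human)."""
    actions, flagged = [], []
    for check in checks:
        observed = observations.get(check.name)
        if observed is None:
            # Missing data is treated as a problem, not as "all fine".
            flagged.append(f"{check.name}: no data")
        elif drifted(check, observed):
            fix = allowed_fixes.get(check.name)
            if fix is not None:
                actions.append(fix)
            else:
                flagged.append(f"{check.name}: drifted to {observed}")
    return actions, flagged


# Illustrative values only (assumptions, not measurements).
checks = [
    Check("briefing_delivered_rate", target=1.0, tolerance=0.05),
    Check("summary_latency_s", target=20.0, tolerance=10.0, higher_is_better=False),
    Check("memory_recall_rate", target=0.9, tolerance=0.1),
]
observations = {"briefing_delivered_rate": 0.80, "summary_latency_s": 25.0}
allowed_fixes = {"briefing_delivered_rate": "switch briefing to fallback model"}

actions, flagged = nightly_review(checks, observations, allowed_fixes)
print(actions)
print(flagged)
```

With these example numbers, the briefing check drifts and has an approved fix, the latency check is still within tolerance, and the memory check reports nothing at all, so it gets flagged. Starting with a short allow-list is one way to extend trust gradually.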

For you, think about feedback loops early. A system that tells you how it’s doing is more valuable than a system that just does its job. Build the mechanism that catches drift before drift becomes failure. The goal isn’t to eliminate all late nights upfront. It’s to make them rarer over time.

What This Means for You

The people who will benefit most from this shift aren’t the ones with the best technical backgrounds. They’re the ones who start before they feel ready.

The window for being an early learner won’t stay open forever. As these tools mature, the messy parts will get abstracted away. New users will have polished interfaces and guided workflows. That convenience comes at a cost: they won’t develop the deep fluency that only comes from wrestling with the primitive version.

The people who learn now will understand not just how to use the tools, but why they work, where they fail, and how to bend them toward unusual problems. That understanding is the durable advantage — and it’s only available to those who start before it’s easy.

Closing

I don’t have a framework or a manifesto. I have a collection of patterns that mostly work, and a growing conviction that the people waiting for AI to stabilise are paying a price they can’t see yet.

The question used to be “Can I build this?”

The better question is “Why not try?”